Effective compression is about finding patterns that make data smaller without losing information. When an algorithm or model can accurately guess the next piece of data in a sequence, it shows that it is good at spotting these patterns. This links the idea of making good guesses, which is what large language models like GPT-4 do very well, to achieving good compression.
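To make the link between prediction and compression concrete, here is a minimal Python sketch. It is an illustration, not the method from the paper. It relies on a standard information-theory fact: an ideal entropy coder spends about `-log2(p)` bits on a symbol that the model predicted with probability `p`. The function names `uniform_bits` and `adaptive_bits`, the simple byte-counting predictor, and the sample data are all assumptions chosen for this example.

```python
import math
from collections import Counter


def uniform_bits(data: bytes) -> float:
    """Cost with no prediction: every byte value is treated as equally likely."""
    # Each of the 256 possible byte values gets probability 1/256, i.e. 8 bits.
    return len(data) * math.log2(256)


def adaptive_bits(data: bytes) -> float:
    """Ideal cost when each byte is predicted from counts of the bytes seen so far.

    This is a toy predictor (hypothetical, for illustration only). Add-one
    smoothing keeps never-seen byte values at a nonzero probability.
    """
    counts = Counter()
    total_bits = 0.0
    for seen, byte in enumerate(data):
        p = (counts[byte] + 1) / (seen + 256)  # predicted probability of this byte
        total_bits += -math.log2(p)            # ideal code length for this byte
        counts[byte] += 1                      # update the model after coding
    return total_bits


# Highly repetitive sample data: easy to predict, so cheap to encode.
sample = b"ab" * 128

print(f"no prediction:      {uniform_bits(sample):.0f} bits")
print(f"adaptive predictor: {adaptive_bits(sample):.0f} bits")
```

The better the predictor's guesses, the higher the probabilities it assigns to what actually comes next, and the fewer bits the encoded output needs. A large language model plays the same role as the toy counter here, only with far more accurate predictions.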

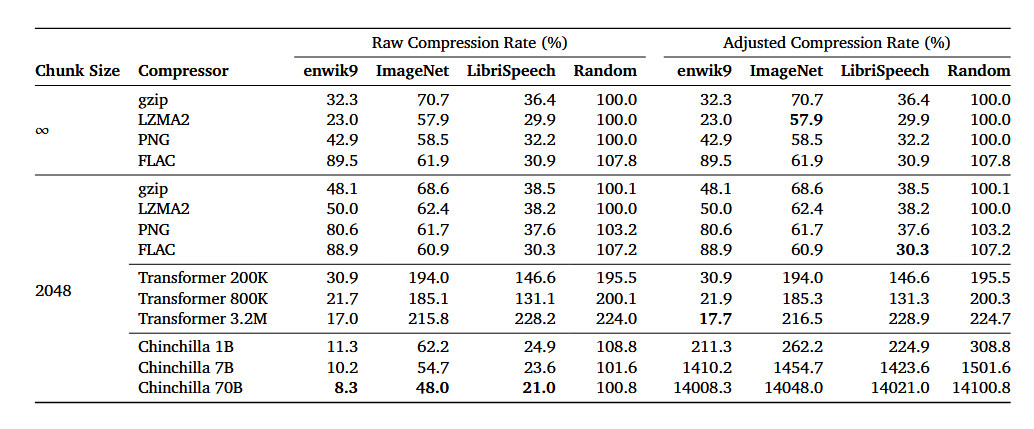

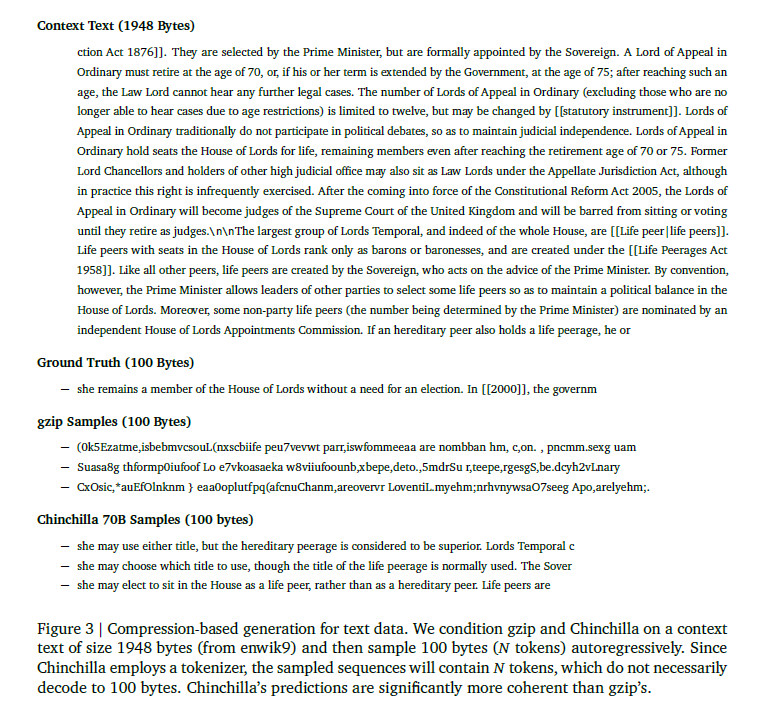

In an arXiv research paper titled "Language Modeling Is Compression," researchers detail their discovery that the DeepMind large language model (LLM) called Chinchilla 70B can perform lossless compression on image patches from the ImageNet image database to 43.4 percent of their original size, beating the PNG algorithm, which compressed the same data to 58.5 percent. For audio, Chinchilla compressed samples from the LibriSpeech audio data set to just 16.4 percent of their raw size, outdoing FLAC compression at 30.3 percent.

In these results, lower numbers mean more compression is taking place. Lossless compression means that no data is lost during the compression process, so the original can be reconstructed exactly. It stands in contrast to a lossy compression technique like JPEG, which sheds some data and reconstructs it with approximations during decoding to significantly reduce file sizes.
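As a rough illustration of how such percentages are calculated, the Python sketch below compresses some sample bytes with the standard library's `zlib` module, checks that decompression restores the input exactly (the lossless property), and reports the compressed size as a percentage of the raw size. Here `zlib` is only a stand-in for this example and is not one of the compressors compared in the paper. The helper name `compression_rate_percent` and the sample text are assumptions.

```python
import zlib


def compression_rate_percent(raw: bytes, compressed: bytes) -> float:
    """Compressed size as a percentage of raw size (lower means more compression)."""
    return 100.0 * len(compressed) / len(raw)


# Hypothetical sample data; repetitive text compresses well.
raw = b"the quick brown fox jumps over the lazy dog. " * 200

compressed = zlib.compress(raw, 9)  # 9 = highest zlib compression level

# Lossless: decompressing must give back exactly the original bytes.
assert zlib.decompress(compressed) == raw

rate = compression_rate_percent(raw, compressed)
print(f"{len(raw)} bytes -> {len(compressed)} bytes ({rate:.1f}% of original size)")
```

By the same arithmetic, a result of 43.4 percent means that every 1,000 bytes of raw data shrink to about 434 bytes.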

The study's results suggest that even though Chinchilla 70B was mainly trained to deal with text, it's surprisingly effective at compressing other types of data as well, often better than algorithms specifically designed for those tasks. This opens the door for thinking about machine learning models as not just tools for text prediction and writing but also as effective ways to shrink the size of various types of data.

Over the past two decades, some computer scientists have proposed that the ability to compress data effectively is akin to a form of general intelligence. The idea is rooted in the notion that understanding the world often involves identifying patterns and making sense of complexity, which, as mentioned above, is similar to what good data compression does. By reducing a large set of data into a smaller, more manageable form while retaining its essential features, a compression algorithm demonstrates a form of understanding or representation of that data, proponents argue.
